AVM Contributor Agent Pt 2 — upgrade testing

A mix of AI and deterministic automation to help AVM contributors

I’m building an agent in the open to help automate open-source contributions for Azure Verified Modules (AVM).

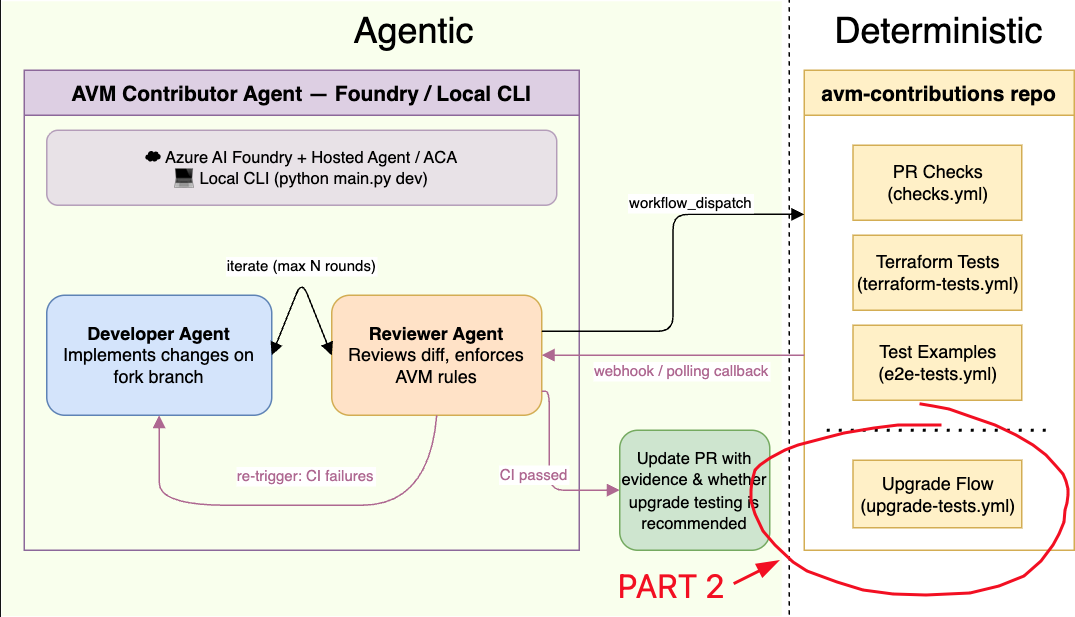

This is part 2. In this part we focus on upgrade testing, using deterministic CI. Part 1 has the background, if you need to catch up.

The workflow illustrates an important design consideration when working with AI: do not use AI inference for tasks that deterministic automation can accomplish simply.

What problem does it solve?

The upgrade-tests workflow in kewalaka/avm-contributions seeks to answer two questions:

- Does the new module version work with existing examples? I call this “Plan A”.
- If you modify the calling code, can you apply the new version without a re-deployment? I call this “Plan B”.

In some cases both will fail, which signals that more complex imports will be needed on the customer side.

It is possible to infer breaking changes by looking at variable changes; however, for this use case I specifically wanted to test deployment behaviour, which picks up a broader range of issues.

How to trigger it

Since I am using this as part of my AVM Contributor AI agent, the workflow is triggered by a repository_dispatch event, so it can be invoked through the GitHub API.
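For orientation, the GitHub REST API accepts a repository_dispatch as a POST to /repos/{owner}/{repo}/dispatches, with an event_type and an optional client_payload. The sketch below only builds that request body. The event type upgrade-test, the payload keys module_repo, new_ref and example, and the repository name are all hypothetical, chosen for illustration; they are not the workflow's real contract.

```python
import json


def build_dispatch_body(event_type, payload):
    """Return the JSON body for POST /repos/{owner}/{repo}/dispatches."""
    # Drop unset optional fields so the receiving workflow sees them as absent.
    client_payload = {k: v for k, v in payload.items() if v is not None}
    return {"event_type": event_type, "client_payload": client_payload}


# Hypothetical event type and payload keys, for illustration only.
body = build_dispatch_body(
    "upgrade-test",
    {
        "module_repo": "my-org/terraform-azurerm-avm-res-example",  # hypothetical
        "new_ref": "main",  # hypothetical: branch or tag holding the new version
        "example": None,  # hypothetical: None means no single example is named
    },
)
print(json.dumps(body, indent=2))
```

The printed JSON could be piped to gh api with --method POST and --input -, or sent with any HTTP client using a token that is allowed to dispatch to the target repository.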

You can simulate the call using the gh CLI command:

| |

In the above example, the final parameter names the example to be tested (‘default’, in this case).

Omit the last line entirely and the workflow discovers every examples/*/main.tf and fans out one matrix job per example.
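The discovery and fan-out step could look roughly like the sketch below, which globs for examples/*/main.tf and emits a matrix with one entry per example folder. The function names and the matrix key example are assumptions made for illustration; the real workflow may implement this differently (for instance in shell).

```python
import json
from pathlib import Path


def discover_examples(repo_root):
    """Return the sorted names of example folders that contain a main.tf."""
    root = Path(repo_root)
    return sorted(p.parent.name for p in root.glob("examples/*/main.tf"))


def build_matrix(repo_root, requested=None):
    """Build a GitHub Actions matrix: one entry per example to test."""
    # A requested example narrows the run to one job; otherwise test them all.
    names = [requested] if requested else discover_examples(repo_root)
    return {"example": names}


if __name__ == "__main__":
    # Prints a JSON string that a workflow could read with fromJSON().
    print(json.dumps(build_matrix(".")))
```

Folders under examples/ without a main.tf are ignored, which keeps documentation-only folders out of the matrix.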

Summary report

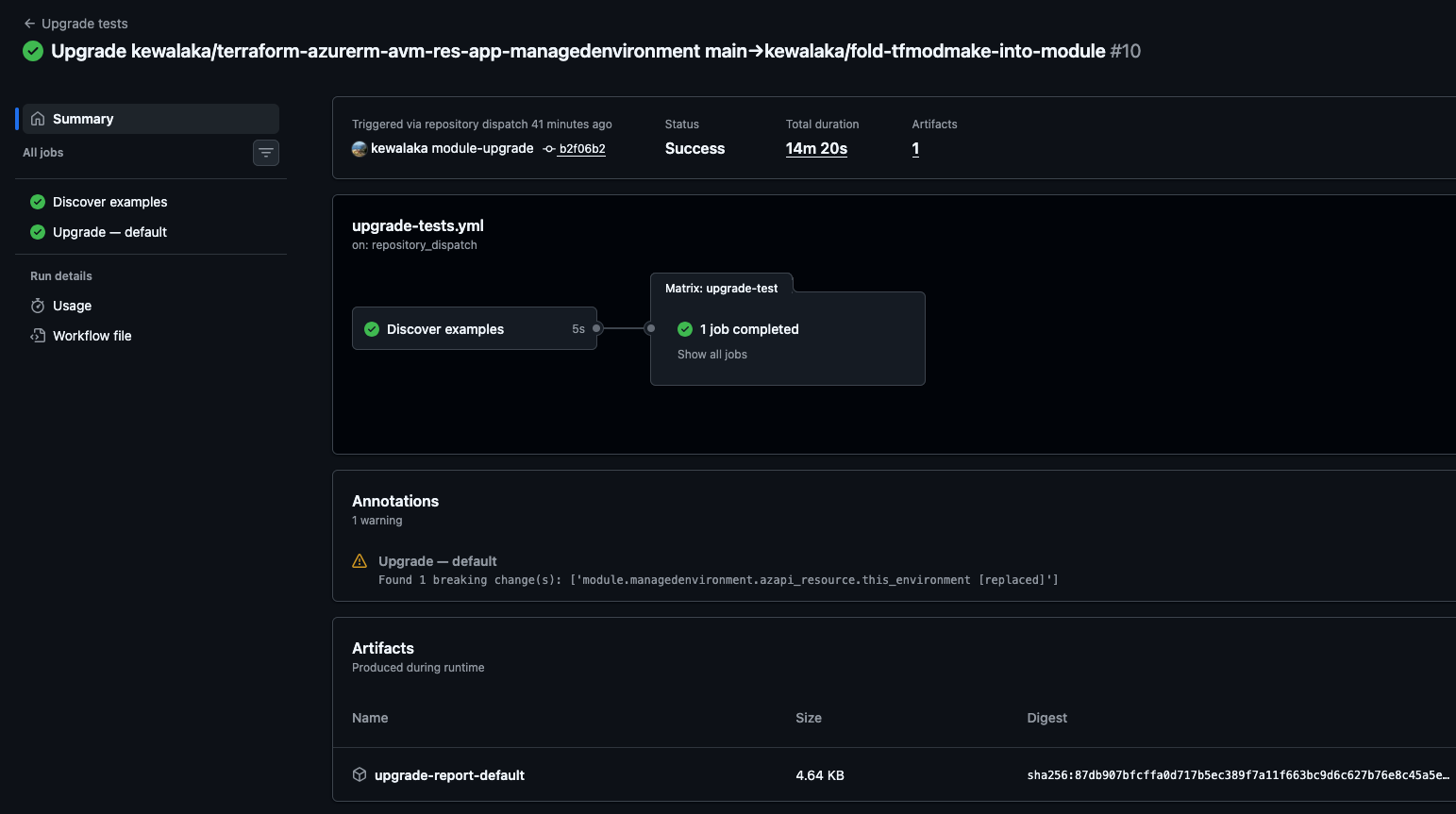

Once complete, any breaking changes are noted as Annotations on the summary report, and an artifact is created with details.

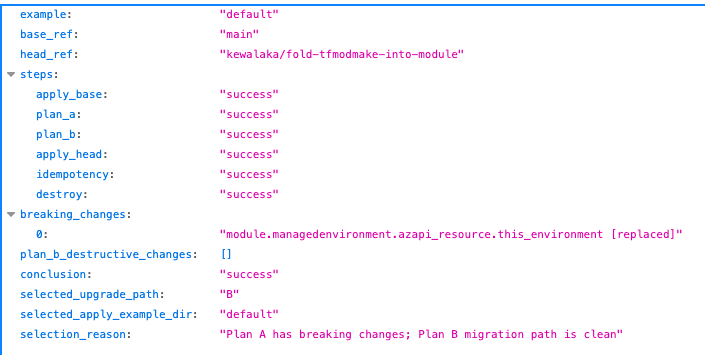

The summary report from the artifact looks like this, showing a single example for brevity:

In addition, the artifact contains a copy of the Terraform plan from both Plan A and Plan B.

Agent callback

When callback_repo is set, the workflow fires a ci-result repository_dispatch on completion:

| |

This is my hook back to the Contributor Agent, which can then investigate the success or failure of the run.

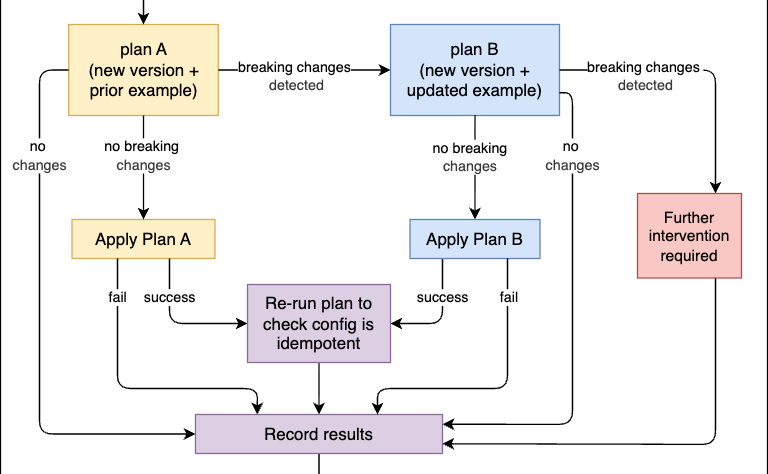

The upgrade flow

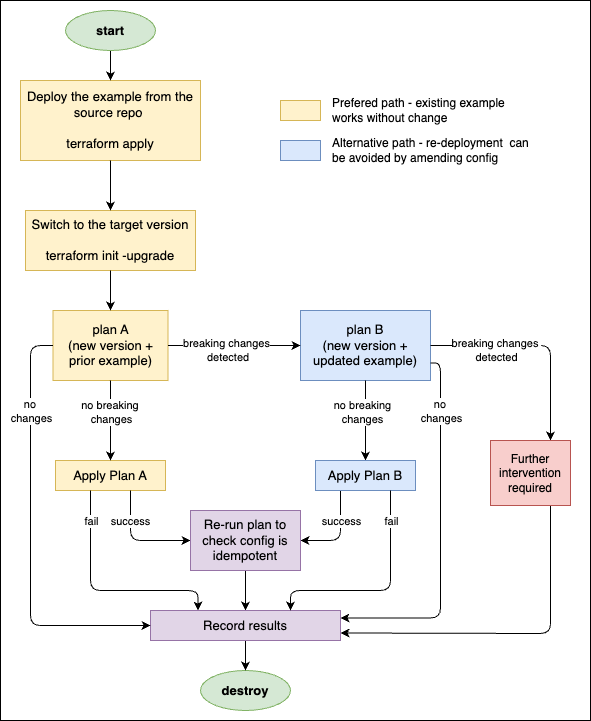

Plan A runs the new module code against the existing example configuration. It is the breaking change test (for the example).

Plan B runs the new module code against the example from the new version. It shows whether you can make changes to the calling attributes and avoid having to re-deploy.
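One way to turn a plan into a pass/fail signal is to inspect the JSON produced by terraform show -json on a saved plan file, where each entry in resource_changes carries a change.actions list. The sketch below treats any delete action, including a replacement, as a re-deployment. That rule is an assumption made for illustration and is not necessarily the classification the workflow uses; the resource addresses in the sample are made up.

```python
import json


def resources_needing_redeploy(plan_json):
    """List addresses of resources the plan would delete or replace."""
    plan = json.loads(plan_json)
    flagged = []
    for rc in plan.get("resource_changes", []):
        actions = rc.get("change", {}).get("actions", [])
        # A replacement appears as ["delete", "create"] or ["create", "delete"].
        if "delete" in actions:
            flagged.append(rc["address"])
    return flagged


# Trimmed, made-up sample in the shape of `terraform show -json` output.
sample = json.dumps({
    "resource_changes": [
        {"address": "azurerm_resource_group.this",
         "change": {"actions": ["no-op"]}},
        {"address": "azurerm_storage_account.this",
         "change": {"actions": ["delete", "create"]}},
        {"address": "azurerm_storage_container.this",
         "change": {"actions": ["update"]}},
    ]
})
print(resources_needing_redeploy(sample))
```

An empty list would suggest the upgrade can be applied in place; a non-empty list names the resources that would be destroyed or recreated.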

If a non-breaking change is detected, an apply is executed, followed by a plan to check the apply is idempotent.

If the selected plan exits with code 0, meaning Terraform compared real infrastructure against the configuration and found no differences, there is nothing to apply, so the terraform apply and idempotency steps are skipped automatically.
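The exit code check can be sketched as follows. It assumes the plan is run with -detailed-exitcode, the Terraform option that makes plan return 0 for no changes, 1 for an error and 2 for pending changes; the post does not show the exact command. It also assumes the selected plan has already been judged non-breaking, and the step names are illustrative.

```python
from enum import Enum


class NextStep(Enum):
    SKIP_APPLY = "skip apply and idempotency check"
    APPLY = "apply, then re-plan to check idempotency"
    FAIL = "plan errored"


def next_step(plan_exit_code):
    """Map a `terraform plan -detailed-exitcode` result to the next step."""
    if plan_exit_code == 0:
        return NextStep.SKIP_APPLY  # no differences, nothing to apply
    if plan_exit_code == 2:
        return NextStep.APPLY  # plan succeeded and there are changes
    return NextStep.FAIL  # 1 (or anything unexpected) is treated as an error


def is_idempotent(post_apply_plan_exit_code):
    """After an apply, a clean re-plan (exit code 0) means it is idempotent."""
    return post_apply_plan_exit_code == 0


for code in (0, 1, 2):
    print(code, "->", next_step(code).value)
```

Treating every unexpected exit code as a failure is a deliberately conservative choice: the run stops instead of applying a plan whose state is unclear.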

The destroy step runs whenever any resources were deployed: a partial apply can still create real resources, and we don’t want to leave these around costing money! It selects the workspace to destroy based on where the process failed.

Prerequisites

A sample script is included in the repo to help generate these:

- A GitHub Actions test environment with OIDC credentials (ARM_TENANT_ID, ARM_SUBSCRIPTION_ID, ARM_CLIENT_ID)
- Federated identity configured for the test environment scope
- Optional: AGENT_DISPATCH_TOKEN secret (PAT with contents: write on the callback repo) for CI result callbacks

Summary

In this post, I’ve demonstrated a deterministic way to test whether AVM modules upgrade successfully from one version to another, using real deployments to test for changes that static checks may miss.

Since this process runs outside the Azure organisation via repository dispatch, external contributors can use this to test modules as well.

You can also re-use this process for your own modules.

Perhaps it may even be useful to re-purpose within the AVM ecosystem; we’ll have to wait and see!

The upgrade workflow itself is live at kewalaka/avm-contributor-agent.